Research.

Preprints.

Embracing Discrete Search: A Reasonable Approach to Causal Structure Learning

M Wienöbst, L Henckel, S Weichwald

We argue for revisiting discrete search in causal structure learning, supported by strong empirical performance across benchmarks. Available in Python (pip install flopsearch) and R.

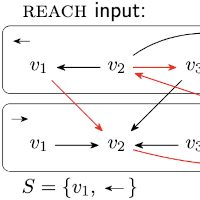

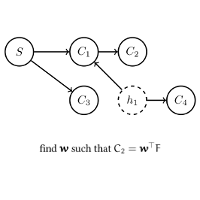

Linear-Time Primitives for Algorithm Development in Graphical Causal Inference

M Wienöbst, S Weichwald, L Henckel

CIfly is a declarative framework for graphical causal inference that reframes many reasoning tasks as reachability queries in purpose-built graphs, enabling scalable algorithm design. It comes with a fast Rust core and is available in Python (pip install ciflypy) and R (install.packages("ciflyr")).
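As an illustration of casting a graphical question as a reachability query, the sketch below tests d-separation in a DAG with a single breadth-first search over (node, direction) states, in the spirit of the classic Bayes-ball / reachable-nodes algorithm. It is plain Python and does not use CIfly or its rule-table format; the function names and the `{node: set_of_parents}` graph encoding are hypothetical choices for this example.

```python
from collections import deque

def children_map(parents):
    """Invert a {node: set_of_parents} mapping into {node: set_of_children}."""
    children = {v: set() for v in parents}
    for v, pas in parents.items():
        for p in pas:
            children[p].add(v)
    return children

def ancestors_of(nodes, parents):
    """Return `nodes` together with all of their ancestors."""
    result, stack = set(nodes), list(nodes)
    while stack:
        v = stack.pop()
        for p in parents[v]:
            if p not in result:
                result.add(p)
                stack.append(p)
    return result

def d_separated(x, y, z, parents):
    """True if x and y are d-separated given the set z in the DAG `parents`.

    A search state is (node, direction): "up" means we arrived from a child,
    "down" means we arrived from a parent. Each state is expanded at most once,
    so the search is linear in the size of the graph.
    """
    z = set(z)
    children = children_map(parents)
    anc_z = ancestors_of(z, parents)  # colliders in this set are open
    visited, reachable = set(), set()
    queue = deque([(x, "up")])
    while queue:
        node, direction = queue.popleft()
        if (node, direction) in visited:
            continue
        visited.add((node, direction))
        if node not in z:
            reachable.add(node)
        if direction == "up" and node not in z:
            # Unconditioned node reached from a child: continue to parents and children.
            queue.extend((p, "up") for p in parents[node])
            queue.extend((c, "down") for c in children[node])
        elif direction == "down":
            if node not in z:
                # Chain through an unconditioned node continues downwards.
                queue.extend((c, "down") for c in children[node])
            if node in anc_z:
                # Collider that is conditioned on (or has a conditioned descendant).
                queue.extend((p, "up") for p in parents[node])
    return y not in reachable

# Collider a -> c <- b, with a descendant c -> d.
g = {"a": set(), "b": set(), "c": {"a", "b"}, "d": {"c"}}
print(d_separated("a", "b", [], g))     # True: the collider c blocks the path
print(d_separated("a", "b", ["c"], g))  # False: conditioning on c opens it
print(d_separated("a", "b", ["d"], g))  # False: so does conditioning on a descendant of c
```

Tracking the direction of arrival is what lets a plain graph search respect the collider rule; this is one simple instance of the reachability idea, not CIfly's general mechanism.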

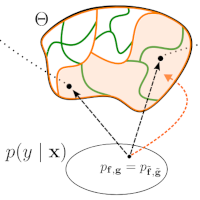

What is causal about causal models and representations?

FH Jørgensen, L Gresele, S Weichwald

We introduce a formal framework to define the requirements for interpreting actions as interventions in causal models. We show that the natural interpretation of actions as interventions is circular, making falsification based on interventions impossible, and prove an impossibility result: no interpretation can be both non-circular and satisfy a set of natural desiderata.

pdf / arXiv / bib

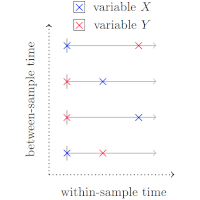

The Case for Time in Causal DAGs

AG Reisach, A Suárez, S Weichwald, A Chambaz

We argue that any causal model requires temporal qualification. We formalize a notion of time for causal variables and argue that this resolves existing ambiguity in causal DAGs and is essential to assessing the validity of the acyclicity assumption.

pdf / arXiv / bib

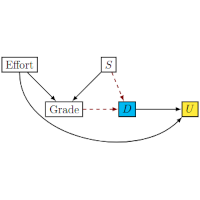

Unfair Utilities and First Steps Towards Improving Them

FH Jørgensen, S Weichwald, J Peters

Many fairness criteria constrain the policy or choice of predictors. In this work, we propose to think about fairness from a utility perspective instead: unfair utilities, which incentivize reconstructing the protected attribute even if all and only essential features could be used, should be avoided.

pdf / arXiv / bib

All or None: Identifiable Linear Properties of Next-token Predictors in Language Modeling

E Marconato, S Lachapelle, S Weichwald*, L Gresele*; *Equal contribution
Accepted at AISTATS 2025

We prove an identifiability result showing that, under suitable conditions, linear properties hold either in all or in none of the distribution-equivalent next-token predictors.

pdf / arXiv / bib

Peer-reviewed.

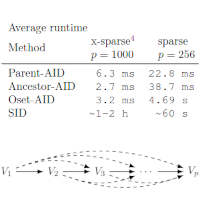

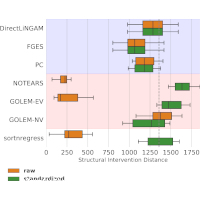

Adjustment Identification Distance: A gadjid for Causal Structure Learning

L Henckel, T Würtzen, S Weichwald
Proceedings of the 40th Conference on Uncertainty in Artificial Intelligence (UAI), 2024

We introduce a framework to develop causal distances between graphs and algorithms to compute them in polynomial time, a first for CPDAGs. Efficient implementations of adjustment identification distances are available for Python (pip install gadjid) and R (install.packages("gadjid")).

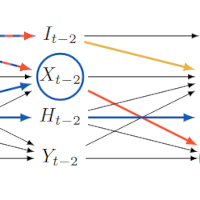

Identifying Causal Effects using Instrumental Time Series: Nuisance IV and Correcting for the Past

N Thams, R Søndergaard, S Weichwald, J Peters
Journal of Machine Learning Research, 25(302):1–51, 2024

We provide a structured treatment of instrumental variable (IV) methods in time series data by showing that valid IV models can be identified by representing the time series as a finite graph. We emphasize the need for adjusting for past states of the time series to get valid instruments and develop Nuisance IV, a modified IV estimator.

pdf / JMLR / arXiv / code / bib
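The point about adjusting for the past can be made concrete with a toy simulation. In the sketch below (our own illustrative setup, not the paper's Nuisance IV estimator), an autocorrelated instrument I_t drives X_t, a hidden confounder H_t affects both X_t and Y_t, and Y_t also depends on X_{t-1}. Because I_t is correlated with X_{t-1}, the plain IV ratio is biased; partialling out X_{t-1} first (a just-identified IV with one control) recovers the effect. The coefficients and the helper names `simulate`, `residualize` and `iv_estimate` are hypothetical.

```python
import numpy as np

def simulate(n, beta=1.5, gamma=1.0, rho=0.8, seed=0):
    """Toy series: I -> X -> Y, hidden H -> X and H -> Y, and X[t-1] -> Y[t]."""
    rng = np.random.default_rng(seed)
    e_i, h, e_x, e_y = rng.standard_normal((4, n))
    instr = np.empty(n)
    instr[0] = e_i[0]
    for t in range(1, n):
        instr[t] = rho * instr[t - 1] + e_i[t]  # autocorrelated instrument
    x = instr + h + e_x                          # h plays the hidden confounder
    y = beta * x + h + e_y
    y[1:] += gamma * x[:-1]                      # lagged effect of X on Y
    return instr, x, y

def residualize(v, controls):
    """Residuals of an OLS regression of v on an intercept and the controls."""
    design = np.column_stack([np.ones(len(v)), controls])
    coef, *_ = np.linalg.lstsq(design, v, rcond=None)
    return v - design @ coef

def iv_estimate(instr, x, y, controls=None):
    """Just-identified IV estimate of the effect of x on y, after partialling
    the (optional) controls out of all three series."""
    if controls is None:
        controls = np.empty((len(y), 0))  # intercept only, i.e. centering
    instr, x, y = (residualize(v, controls) for v in (instr, x, y))
    return float(instr @ y / (instr @ x))

instr, x, y = simulate(50_000)
# Use time points t >= 1 so that x[t-1] is available as a control.
naive = iv_estimate(instr[1:], x[1:], y[1:])
adjusted = iv_estimate(instr[1:], x[1:], y[1:], controls=x[:-1])
print(f"naive IV: {naive:.2f}, IV adjusting for X[t-1]: {adjusted:.2f} (true beta = 1.5)")
```

In this setup the naive ratio picks up the lagged path I_t, I_{t-1}, X_{t-1}, Y_t and lands well above 1.5, whereas the adjusted estimate is close to the true value.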

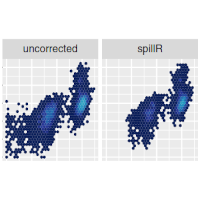

spillR: Spillover Compensation in Mass Cytometry Data

M Guazzini, AG Reisach, S Weichwald, C Seiler
Bioinformatics, 40(6), 2024

To compensate for spillover in mass cytometry, we propose a nonparametric finite mixture model that transfers spillover distributions from beads to real data.

pdf / doi / bioRxiv / R package / article .Rmd / bib

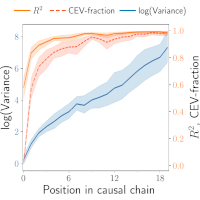

A Scale-Invariant Sorting Criterion to Find a Causal Order in Additive Noise Models

AG Reisach, M Tami, C Seiler, A Chambaz, S Weichwald
Advances in Neural Information Processing Systems 36 (NeurIPS), 2023

We extend var-sortability (cf. Reisach et al., 2021) to R²-sortability, a pattern whereby the fraction of variance explained by the other variables tends to increase along the causal order. This pattern can easily be exploited to learn the causal structure of ANMs even on standardized or rescaled data.

pdf / arXiv / CausalDisco / OpenReview / bib
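A minimal sketch of the quantities involved, computed on a population covariance matrix. It assumes the standard identity R²_i = 1 − 1/(Σ_ii (Σ⁻¹)_ii) for the R² of regressing variable i on all others, and our reading of the sortability score: each ordered pair (i, j) is counted once for every path length k at which j is reachable from i, and ties count 1/2. The function names are hypothetical; CausalDisco ships its own implementation, whose API is not reproduced here.

```python
import numpy as np

def r2_coefficients(cov):
    """R^2 of regressing each variable on all other variables, computed from the
    covariance matrix via R^2_i = 1 - 1 / (cov[i, i] * inv(cov)[i, i])."""
    precision = np.linalg.inv(cov)
    return 1.0 - 1.0 / (np.diag(cov) * np.diag(precision))

def sortability(scores, adjacency, tol=1e-9):
    """Fraction of ordered pairs (i, j) along which `scores` increase, where each
    pair is counted once for every length k = 1..d-1 of a directed path from i
    to j (adjacency[i, j] != 0 means an edge i -> j). Ties count 1/2."""
    edges = adjacency != 0
    reach = edges.copy()
    hits, total = 0.0, 0
    for _ in range(len(scores) - 1):
        for i, j in zip(*np.nonzero(reach)):
            total += 1
            diff = scores[j] - scores[i]
            hits += 1.0 if diff > tol else (0.5 if abs(diff) <= tol else 0.0)
        reach = (reach.astype(int) @ edges.astype(int)) > 0  # paths one edge longer
    return hits / total

# Chain X0 -> X1 -> X2 with unit edge weights and unit noise variances.
# Row convention x = x @ B + noise, so cov = A.T @ A with A = inv(I - B).
B = np.array([[0., 1., 0.], [0., 0., 1.], [0., 0., 0.]])
A = np.linalg.inv(np.eye(3) - B)
cov = A.T @ A

scale = np.diag([10.0, 0.1, 3.0])           # arbitrary rescaling of the variables
r2_raw = r2_coefficients(cov)               # approximately 0.5, 0.75, 0.667
r2_scaled = r2_coefficients(scale @ cov @ scale)

print(np.allclose(r2_raw, r2_scaled))       # True: R^2 does not depend on the scale
print(sortability(r2_raw, B))               # 0.666...: 2 of the 3 path pairs increase
```

Because the R² values are unchanged by rescaling, their ordering (and hence R²-sortability) survives standardization, which is what makes the pattern exploitable on standardized data.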

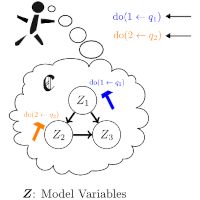

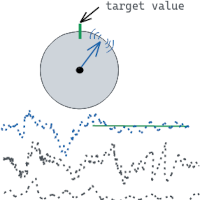

Learning by Doing: Controlling a Dynamical System using Causality, Control, and Reinforcement Learning

S Weichwald, SW Mogensen, TE Lee, D Baumann, O Kroemer, I Guyon, S Trimpe, J Peters, N Pfister
Proceedings of the NeurIPS 2021 Competition and Demonstration Track, Proceedings of Machine Learning Research, 176:246–258, 2022

Control theory, reinforcement learning, and causality are all ways of mathematically describing how the world changes when we interact with it. Each field offers a different perspective with its own strengths and weaknesses. In this NeurIPS competition, we aimed to bring together researchers from all three fields to encourage cross-disciplinary discussions.

pdf / arXiv / code / Track CHEM / Track ROBO / LBD competition / bib

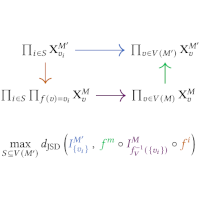

Compositional Abstraction Error and a Category of Causal Models

EF Rischel, S Weichwald
Proceedings of the 37th Conference on Uncertainty in Artificial Intelligence (UAI), 2021

We argue that compositionality is a desideratum for causal model transformations and the associated errors. We introduce a category of finite interventional causal models and, leveraging the theory of enriched categories, prove that our framework enjoys the desired compositionality properties.

pdf / arXiv / bib

Beware of the Simulated DAG! Causal Discovery Benchmarks May Be Easy To Game

AG Reisach, C Seiler, S Weichwald
Advances in Neural Information Processing Systems 34 (NeurIPS), 2021

In additive noise models (ANMs), the ordering of variables by marginal variances may be indicative of the causal order. We introduce varsortability as a measure of this agreement between orderings. Since varsortability is high in simulated ANMs, we advocate reporting varsortability when benchmarking.

pdf / arXiv / code / explainer / OpenReview / bib
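A minimal sketch of computing varsortability from a population covariance matrix and a known graph. It follows our reading of the definition (each ordered pair (i, j) counted once for every path length k at which j is reachable from i, ties counting 1/2); the function name and the toy chain are hypothetical, and this is not the CausalDisco code.

```python
import numpy as np

def varsortability(cov, adjacency, tol=1e-9):
    """Fraction of ordered pairs (i, j) along which the marginal variance
    increases, where each pair is counted once for every length k = 1..d-1 of a
    directed path from i to j (adjacency[i, j] != 0 means an edge i -> j).
    Ties count 1/2."""
    var = np.diag(cov)
    edges = adjacency != 0
    reach = edges.copy()
    hits, total = 0.0, 0
    for _ in range(len(var) - 1):
        for i, j in zip(*np.nonzero(reach)):
            total += 1
            diff = var[j] - var[i]
            hits += 1.0 if diff > tol else (0.5 if abs(diff) <= tol else 0.0)
        reach = (reach.astype(int) @ edges.astype(int)) > 0  # paths one edge longer
    return hits / total

# Chain X0 -> X1 -> X2 with unit edge weights and unit noise variances.
B = np.array([[0., 1., 0.], [0., 0., 1.], [0., 0., 0.]])
A = np.linalg.inv(np.eye(3) - B)
cov = A.T @ A                      # marginal variances 1, 2, 3

sd = np.sqrt(np.diag(cov))
corr = cov / np.outer(sd, sd)      # covariance of the standardized variables

print(varsortability(cov, B))      # 1.0: variance increases along every path
print(varsortability(corr, B))     # 0.5: all variances tie after standardizing
```

A value near 1 means that simply sorting the variables by marginal variance, as the SortnRegress baselines do, already recovers most of the causal order; standardizing removes this shortcut, as the second call shows.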

Improving 1-year mortality prediction in ACS patients using machine learning

S Weichwald, A Candreva*, R Burkholz*, R Klingenberg, L Räber, D Heg, R Manka, B Gencer, F Mach, D Nanchen, N Rodondi, S Windecker, R Laaksonen, SL Hazen, Av Eckardstein, F Ruschitzka, TF Lüscher, JM Buhmann, CM Matter; *Equal contribution
European Heart Journal: Acute Cardiovascular Care, 10(8):855–865, 2021

We discuss a model development procedure for small, imbalanced datasets.

pdf / doi / free access / bib

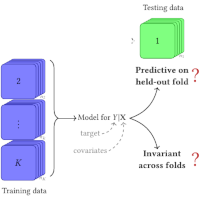

Causality in cognitive neuroscience: concepts, challenges, and distributional robustness

S Weichwald, J Peters
Journal of Cognitive Neuroscience, 33(2):226–247, 2021

We outline why we believe that distributional robustness and model generalisability can be useful for guiding causality research in cognitive neuroscience. In particular, they can help address the scarcity of targeted interventional data and the difficulty of defining the right variables. We provide an accessible introduction to causality and review selected causal discovery approaches and their underlying ideas, assumptions, and problems.

pdf / doi / arXiv / bib

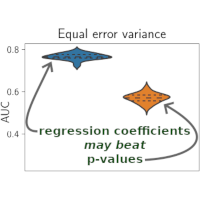

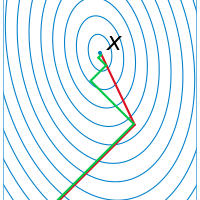

Causal structure learning from time series: Large regression coefficients may predict causal links better in practice than small p-values

S Weichwald, ME Jakobsen, PB Mogensen, L Petersen, N Thams, G Varando
Proceedings of the NeurIPS 2019 Competition and Demonstration Track, Proceedings of Machine Learning Research, 123:27–36, 2020

We describe the algorithms for causal structure learning from time series data that won the NeurIPS competition »Causality 4 Climate« 2019. We examine why large regression coefficients may predict causal links better in practice than small p-values and thus why normalising the data may sometimes hinder causal structure learning. The algorithms are available at tidybench.

pdf / arXiv / tidybench / bib
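The idea of scoring candidate links by coefficient magnitude rather than by p-values can be sketched with an ordinary least-squares VAR(1) fit, using the absolute lagged coefficient as the score for each link i → j. This is a simplified illustration under our own assumptions (lag 1, plain OLS, one toy simulation), not one of the tidybench algorithms; `var1_link_scores` is a hypothetical helper.

```python
import numpy as np

def var1_link_scores(x):
    """Fit x[t] = x[t-1] @ coef + noise by least squares and return a (d, d)
    matrix whose entry [i, j] scores the lagged link x_i -> x_j."""
    past, present = x[:-1], x[1:]
    coef, *_ = np.linalg.lstsq(past, present, rcond=None)  # coef[i, j]: x_i(t-1) -> x_j(t)
    return np.abs(coef)

rng = np.random.default_rng(1)
n, d = 5_000, 3
A = np.array([[0.5, 0.8, 0.0],   # entry [i, j] is the effect of x_i(t-1) on x_j(t)
              [0.0, 0.5, 0.0],
              [0.0, 0.0, 0.5]])
x = np.zeros((n, d))
for t in range(1, n):
    x[t] = x[t - 1] @ A + rng.standard_normal(d)

scores = var1_link_scores(x)
np.fill_diagonal(scores, 0.0)    # ignore self-links when ranking
i, j = np.unravel_index(np.argmax(scores), scores.shape)
print(f"highest-scoring link: x{i} -> x{j}")  # x0 -> x1
```

Note that the scores are raw coefficients and therefore depend on the scale of the variables; this is one reason why normalising the data can change which links rank highest.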

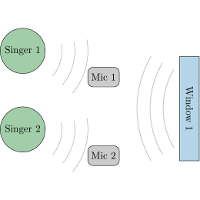

Robustifying Independent Component Analysis by Adjusting for Group-Wise Stationary Noise

N Pfister*, S Weichwald*, P Bühlmann, B Schölkopf; *Equal contribution
Journal of Machine Learning Research, 20(147):1–50, 2019

We introduce coroICA, a confounding-robust variant of independent component analysis (ICA) that adjusts for group-wise stationary noise.

pdf / JMLR / arXiv / Python/R/matlab / audible example / EEG example video / bib

Causal Consistency of Structural Equation Models

PK Rubenstein*, S Weichwald*, S Bongers, JM Mooij, D Janzing, M Grosse-Wentrup, B Schölkopf; *Equal contribution
Proceedings of the 33rd Conference on Uncertainty in Artificial Intelligence (UAI), 2017

Ideally, causal models of the same system should be consistent with one another in the sense that they agree in their predictions of the effects of interventions. We formalise this notion of consistency in the case of Structural Equation Models (SEMs) by introducing exact transformations between SEMs.

pdf / arXiv / bib

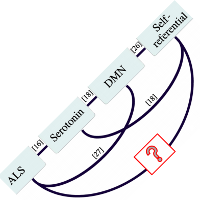

Absence of EEG correlates of self-referential processing depth in ALS

T Fomina, S Weichwald, M Synofzik, J Just, L Schöls, B Schölkopf, M Grosse-Wentrup
PLoS ONE, 12(6):e0180136, 2017

We find that electroencephalography (EEG) correlates of self-referential thinking are present in healthy individuals, but not in those with ALS. In particular, thinking about themselves or others significantly modulates the bandpower in the medial prefrontal cortex in healthy individuals, but not in ALS patients.

pdf / doi / bib

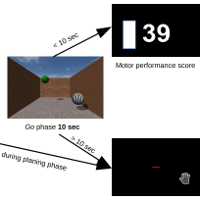

Personalized Brain-Computer Interface Models for Motor Rehabilitation

A Mastakouri, S Weichwald, O Özdenizci, T Meyer, B Schölkopf, M Grosse-Wentrup
IEEE International Conference on Systems, Man, and Cybernetics (SMC 2017), 2017

We propose to fuse two currently separate research lines on novel therapies for stroke rehabilitation: brain-computer interface (BCI) training and transcranial electrical stimulation (TES).

pdf / doi / arXiv / bib

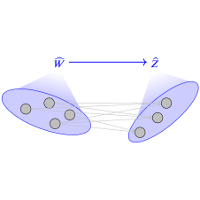

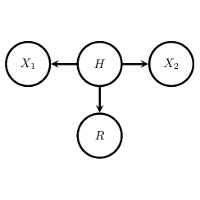

MERLiN: Mixture Effect Recovery in Linear Networks

S Weichwald, M Grosse-Wentrup, A Gretton
IEEE Journal of Selected Topics in Signal Processing, 10(7):1254–1266, 2016

MERLiN is a causal inference algorithm that can recover from an observed linear mixture a causal variable that is an effect of another given variable. MERLiN implements a novel idea on how to (re-)construct causal variables and is robust against hidden confounding.

pdf / doi / arXiv / code / bib

Pymanopt: A Python Toolbox for Optimization on Manifolds using Automatic Differentiation

J Townsend, N Koep, S Weichwald
Journal of Machine Learning Research, 17(137):1–5, 2016

Pymanopt lowers the barrier for users wishing to use state-of-the-art manifold optimization techniques by using automatic differentiation to calculate derivative information, saving users time and sparing them potential calculation and implementation errors. (Example: manifold optimisation for inferring the parameters of a mixture of Gaussians (MoG) model.)
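To show the kind of work Pymanopt takes off the user's hands, here is a hand-written Riemannian gradient ascent on the unit sphere that maximises the Rayleigh quotient xᵀAx, i.e. finds a leading eigenvector. The Euclidean gradient is derived by hand and projected onto the tangent space, and normalisation serves as the retraction. This is plain NumPy and deliberately does not use Pymanopt's API (with Pymanopt one would specify only the cost and let automatic differentiation supply the gradient); the step size, iteration count and function name are assumptions for this example.

```python
import numpy as np

def leading_eigenvector(a, step=0.1, iters=500, seed=0):
    """Maximise f(x) = x^T a x over the unit sphere by Riemannian gradient ascent."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(a.shape[0])
    x /= np.linalg.norm(x)
    for _ in range(iters):
        egrad = 2 * a @ x                  # Euclidean gradient, derived by hand
        rgrad = egrad - (x @ egrad) * x    # project onto the tangent space at x
        x = x + step * rgrad
        x /= np.linalg.norm(x)             # retraction back onto the sphere
    return x

# Symmetric test matrix with known eigenvalues 5, 2, 1, 0.5.
rng = np.random.default_rng(42)
q, _ = np.linalg.qr(rng.standard_normal((4, 4)))
a = q @ np.diag([5.0, 2.0, 1.0, 0.5]) @ q.T

x = leading_eigenvector(a)
print(abs(x @ q[:, 0]))  # close to 1: x matches the top eigenvector up to sign
```

The hand-derived `egrad` line is exactly the step where a derivation or implementation error could creep in for a more complicated cost.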

Recovery of non-linear cause-effect relationships from linearly mixed neuroimaging data

S Weichwald, A Gretton, B Schölkopf, M Grosse-Wentrup
International Workshop on Pattern Recognition in Neuroimaging (PRNI), 2016

This paper proposes an extension of the MERLiN algorithm to identify non-linear cause-effect relationships from linearly mixed neuroimaging data.

pdf / doi / arXiv / code / slides / bib

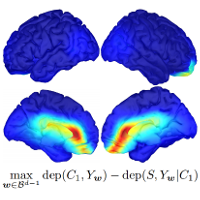

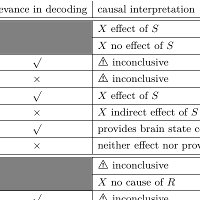

Causal interpretation rules for encoding and decoding models in neuroimaging

S Weichwald, T Meyer, O Özdenizci, B Schölkopf, T Ball, M Grosse-Wentrup
NeuroImage, 110:48–59, 2015

We provide a set of rules specifying which causal statements are warranted and which are not supported by empirical evidence. Only encoding models in the stimulus-based setting support unambiguous causal interpretations. By combining encoding and decoding models, however, we obtain insights into causal relations beyond those implied by either model type alone.

pdf / doi / arXiv / explainer / slides / bib

Causal and anti-causal learning in pattern recognition for neuroimaging

S Weichwald, B Schölkopf, T Ball, M Grosse-Wentrup
International Workshop on Pattern Recognition in Neuroimaging (PRNI), 2014

In this paper, we argue that it is not sufficient to distinguish between encoding and decoding models: The interpretation of such models depends on whether they are employed in a stimulus- or response-based setting.

pdf / doi / arXiv / bib

Decoding index finger position from EEG using random forests

S Weichwald, T Meyer, B Schölkopf, T Ball, M Grosse-Wentrup
4th International Workshop on Cognitive Information Processing (CIP), 2014

We show that index finger positions can be differentiated from non-invasive EEG recordings in healthy human subjects. High β-power (20–30 Hz) over contralateral sensorimotor cortex carried most information about finger position.

This work won the best student paper award.
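For readers unfamiliar with band-power features, here is a minimal sketch of computing the mean power of a signal in the 20–30 Hz β band from its periodogram. The sampling rate, the synthetic test signals and the helper name `band_power` are assumptions for illustration; the paper's actual preprocessing and random-forest pipeline are not reproduced.

```python
import numpy as np

def band_power(signal, fs, low, high):
    """Mean periodogram power of `signal` (sampled at `fs` Hz) in [low, high] Hz."""
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal)) ** 2 / len(signal)
    mask = (freqs >= low) & (freqs <= high)
    return float(power[mask].mean())

fs = 250.0                                # assumed sampling rate in Hz
t = np.arange(500) / fs                   # two seconds of samples
beta_like = np.sin(2 * np.pi * 25 * t)    # 25 Hz: inside the beta band
alpha_like = np.sin(2 * np.pi * 10 * t)   # 10 Hz: outside the beta band

print(band_power(beta_like, fs, 20, 30) > band_power(alpha_like, fs, 20, 30))  # True
```

In practice such features would be computed per channel (for example over contralateral sensorimotor cortex) and passed to a classifier.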

Thesis.

Pragmatism and Variable Transformations in Causal Modelling

S Weichwald
ETH Zurich, 2019

The statistical treatment of causal modelling lays out methodology that, under well-specified assumptions, enables us to infer cause-effect relationships from observational data. The adoption and fruitful utilisation of such methods remain limited, however, despite the statistical foundations and numerous theoretical advances. In this thesis, we present contributions towards closing the gap between statistical causal modelling and its successful application.

pdf / doi / bib

Other.

What is Cantor’s continuum problem?

S Weichwald
2013

This seminar paper reviews Kurt Gödel’s article »What is Cantor’s continuum problem?«. As this paper aims to be almost self-contained, short recaps, rough explanations and selective examples are provided where appropriate.

pdf

Software.

- CausalDisco 🪩
  Python toolbox offering various SortnRegress baseline algorithms for causal discovery and R²- and Var-sortability diagnostic tools to assess discovery tasks.
  code / documentation

- coroICA
  Confounding-robust Independent Component Analysis.
  Python / R / matlab / documentation

- gadjid
  Adjustment identification distances to compare DAGs and CPDAGs.
  code

- Pymanopt
  Python toolbox for optimisation on manifolds with support for automatic differentiation. Part of the manopt family.
  code / documentation

- MERLiN (non-linear & linear)
  Mixture Effect Recovery in Linear Networks.
  code

- tidybench
  TIme series DiscoverY BENCHmark: implementation of our competition-winning algorithms.
  code